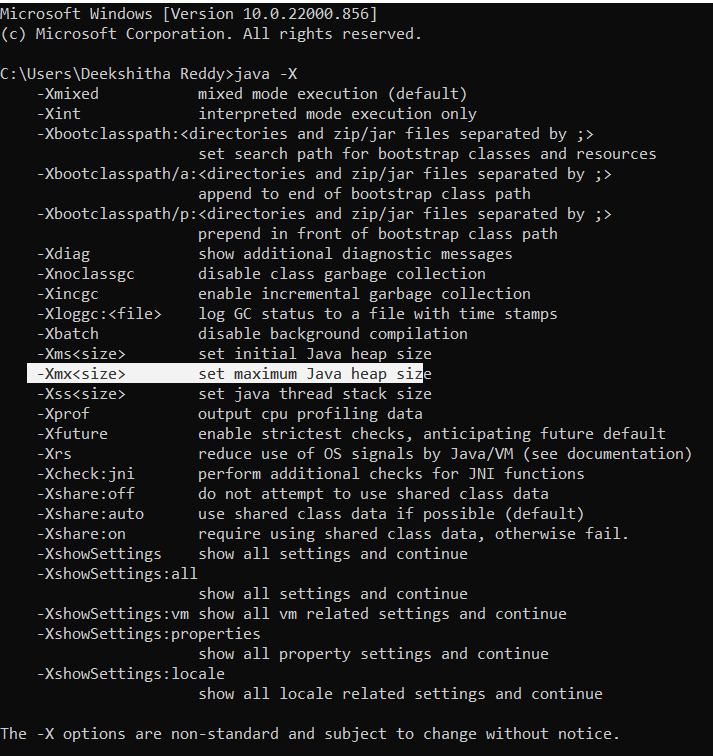

You have added the -Xmx option to your startup scripts and sat back relaxed, knowing that there is no way your Java process is going to eat up more memory than your fine-tuned option permits. And then you were in for a nasty surprise. Either you found it yourself when checking the process table on your development or test box, or, if things got really bad, operations called you in the middle of the night to tell you that the 4G of memory you had asked for in production is exhausted.

So what the heck is happening under the hood? Why is the process consuming more memory than you allocated? Is it a bug or something completely normal? Bear with me and I will guide you through what is happening.

First of all, part of it can definitely be malicious native code leaking memory. But in 99% of the cases it is completely normal behaviour by the JVM. What you have specified via the -Xmx switch only limits the memory consumed by your application heap.

Besides the heap there are other regions in memory which your application is using under the hood, namely permgen and the thread stacks. So in order to limit those you should also specify the -XX:MaxPermSize and -Xss options respectively. In short, you can predict your application memory usage with the following formula:

Max memory = [-Xmx] + [-XX:MaxPermSize] + number_of_threads * [-Xss]

But besides the memory consumed by your application, the JVM itself also needs some elbow room. The need for it derives from several different reasons:

- As you might recall, Java is a garbage-collected language. In order for the garbage collector to know which objects are eligible for collection, it needs to keep track of the object graphs. So this is one part of the memory lost to internal bookkeeping. G1 is especially known for its excessive appetite for additional memory, so be aware of this.
- The Java Virtual Machine optimizes the code during runtime. Again, to know which parts to optimize, it needs to keep track of the execution of certain code parts.
- If you happen to use off-heap memory, for example while using direct or mapped ByteBuffers yourself or via some clever 3rd party API, then voila: you are extending your memory usage beyond the heap into something you cannot control via -Xmx.
- When you are using native code, for example in the form of Type 2 database drivers, then again you are loading code into native memory.
- If you are an early adopter of Java 8, you are using metaspace instead of the good old permgen to store class declarations. Metaspace is unlimited by default and lives in the native part of the JVM's memory.

You can end up using memory for other reasons than those listed above as well, but I hope I managed to convince you that there is a significant amount of memory eaten up by the JVM internals. But is there a way to predict how much memory is actually going to be needed? Or at least understand where it disappears in order to optimize?

As we have found out via painful experience, it is not possible to predict it with reasonable precision. The JVM overhead can range from just a few percent to several hundred percent. Your best friend is again the good old trial and error. So you need to run your application with loads similar to the production environment and measure it.

Measuring the additional overhead is trivial: just monitor the process with the OS built-in tools (top on Linux, Activity Monitor on OS X, Task Manager on Windows) to find out the real memory consumption. Subtract the heap and permgen sizes from the real consumption and you see the overhead.

Now if you need to reduce the overhead, you would like to understand where it actually disappears.
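To make the arithmetic concrete, here is a minimal sketch of the formula and of the "subtract heap and permgen from the real consumption" step. The class name `MemoryBudget`, its method names, and all the numbers in `main` are made up for illustration (a hypothetical `-Xmx4g -XX:MaxPermSize=256m -Xss1m` setup with 200 threads and a resident size read off `top`); plug in your own measurements.

```java
public final class MemoryBudget {
    private MemoryBudget() {}

    /** Max memory = -Xmx + -XX:MaxPermSize + number_of_threads * -Xss (all sizes in bytes). */
    public static long maxMemoryBytes(long xmx, long maxPermSize, int threads, long xss) {
        return xmx + maxPermSize + (long) threads * xss;
    }

    /** Overhead = real consumption reported by the OS minus the heap and permgen sizes. */
    public static long overheadBytes(long realConsumption, long heap, long permgen) {
        return realConsumption - heap - permgen;
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024L;

        // Hypothetical settings: -Xmx4g -XX:MaxPermSize=256m -Xss1m, 200 threads.
        long heap = 4096 * mb;
        long permgen = 256 * mb;
        long budget = maxMemoryBytes(heap, permgen, 200, mb);

        // Hypothetical resident size of the process as shown by top / Task Manager.
        long realConsumption = 5500 * mb;
        long overhead = overheadBytes(realConsumption, heap, permgen);

        System.out.println("Predicted application maximum: " + budget / mb + " MB");
        System.out.println("Measured overhead: " + overhead / mb + " MB");
    }
}
```

With these made-up inputs the predicted maximum is 4552 MB and the measured overhead is 1148 MB. Note that the overhead computed this way still includes the thread stacks, since only heap and permgen are subtracted.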
